The Story Point Illusion

Why your team's estimates are hiding disagreement instead of resolving it

“Story points aren’t about the numbers. They trigger conversations about effort and complexity.”

I hear this defence constantly. It sounds reasonable on the surface. Almost wise. But I want you to think carefully about what it’s actually saying, because underneath the reasonable-sounding words lies a significant admission.

If the real value of story points is the conversation they trigger, why do you need story points to have that conversation?

You could just talk about the work. Directly. Without the numerical theatre. Without the planning poker cards. Without the endless debates about whether this piece of work is a 3 or a 5 or maybe an 8.

The fact that we need to dress up “having a conversation about work” in estimation clothing should make us suspicious. It suggests that something else is going on. And that something else is where the danger lies.

Let me be clear about what I mean when I say story points are dangerous. I don’t mean they’re mildly unhelpful or slightly inefficient. I mean they actively harm teams, damage trust, and create dysfunction. They do this in ways that are subtle enough to go unnoticed for years, which makes them more dangerous, not less.

Story points don’t measure anything real

When you measure something in metres, everyone agrees what a metre is. When you measure something in kilograms, we have a shared understanding of the unit. Story points don’t work this way. A “point” means something different to every team, and often means something different to every person on the same team.

Some people think about points as effort. How much work will this take? Some people think about points as complexity. How many moving parts are involved? Some people think about points as risk. How likely is this to go wrong? Some people think about points as uncertainty. How much do we not know?

These are all different things. Effort and complexity are related but not the same. A task can be simple but effortful, like copying a thousand records by hand. A task can be complex but quick, like making a small change to a critical algorithm where you need to understand the whole system but the actual change is tiny.

When your team sits down to estimate, each person is measuring a different thing with the same number. You’re using the same scale to weigh apples, measure distances, and count sheep. Then you’re surprised when the numbers don’t mean anything useful.

Agreement on a number creates an illusion of shared understanding

The second problem is more insidious than the first. Imagine your team is estimating a story. After some discussion, everyone holds up a 5. Success, right? You’ve reached consensus. The process worked.

But did it?

Person A is thinking about the database changes required. They’ve estimated a 5 because they know the schema is messy and migrations are risky.

Person B is thinking about the front-end work. They’ve estimated a 5 because there are several screens to update and the design isn’t finalised.

Person C is thinking about the integration with the external API. They’ve estimated a 5 because they’ve never worked with this API before and the documentation looks sparse.

Person D is thinking about testing. They’ve estimated a 5 because this feature touches several existing workflows and regression testing will be extensive.

Everyone said 5. Everyone had completely different reasons. They’re not estimating the same work. They’re not even thinking about the same aspects of the work.

The number created false consensus. It let everyone believe they were aligned when they weren’t. And this false consensus is worse than open disagreement, because open disagreement gets resolved. False consensus just waits quietly until it explodes during implementation.

If Person A starts working on this story, they might ignore the front-end complexity that Person B was worried about. If Person C picks it up, they might not realise the database concerns that Person A had in mind. The “shared estimate” shared nothing except a digit.

This is what I mean when I say story points are dangerous. They create a feeling of alignment without actual alignment. They let teams believe they’ve communicated when they haven’t. They substitute a ritual for real understanding.

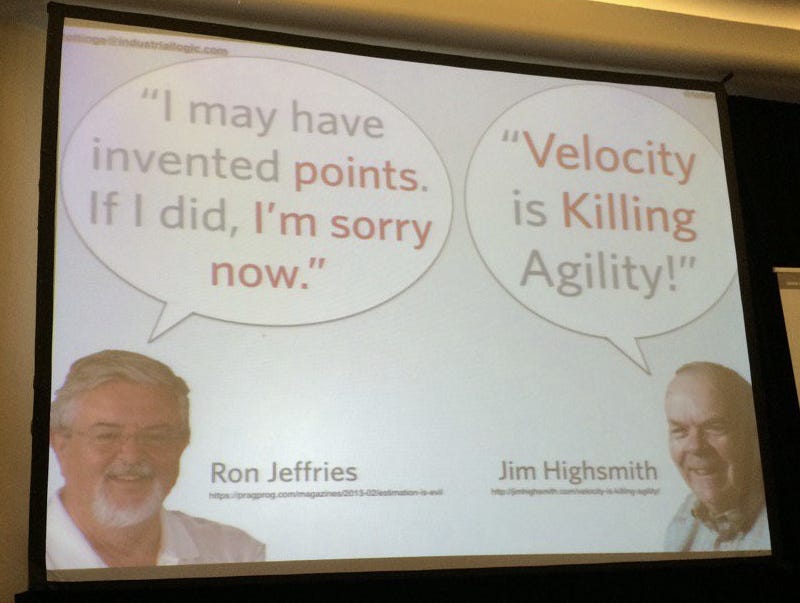

The retreat to “valuable conversations”

Now let’s talk about what happens when teams try to defend story points.

The most common defence is the one I started with: story points trigger valuable conversations. But notice what this defence concedes. It concedes that the points themselves aren’t valuable. It concedes that accuracy doesn’t matter. It concedes that the numbers are essentially arbitrary.

If someone told you that their weighing scale was completely inaccurate but it was still valuable because stepping on it reminded them to think about their health, you’d suggest they just think about their health directly and throw away the broken scale.

The “triggers conversations” defence is what people say when they can no longer defend the thing on its own merits. It’s a retreat to secondary benefits. And those secondary benefits can be achieved more directly without the harmful primary effects.

If you want to understand complexity, ask “what makes this complicated?” directly.

If you want to surface risks, ask “what could go wrong?” directly.

If you want to check whether the team has shared understanding, ask “how would we approach this?” directly.

If you want to know if everyone is thinking about the same work, ask “what do you think this story involves?” directly.

These questions actually achieve the supposed goals of estimation. They surface different perspectives. They reveal hidden assumptions. They create genuine shared understanding rather than the illusion of it.

And crucially, they don’t produce a number that will later be misused.

Story points get weaponised

Here’s the third danger of story points: they get weaponised.

No matter how many times you tell management that story points aren’t comparable across teams, they will compare them across teams. No matter how many times you explain that velocity isn’t a productivity measure, it will be used as a productivity measure. No matter how many times you insist that points shouldn’t be converted to hours, someone will create a conversion formula.

Story points create numbers. Organisations love numbers. Numbers go into spreadsheets. Spreadsheets go into reports. Reports go to executives. Executives make decisions based on those numbers.

And now your meaningless, arbitrary, inconsistent, incomparable numbers are driving organisational decisions. Teams get pressured to increase velocity. Developers learn to inflate estimates so they can “deliver more points.” Gaming begins. Trust erodes.

You might say this is a misuse of story points, not a problem with story points themselves. But this misuse is inevitable. It happens in nearly every organisation that adopts story points. If a tool is consistently misused across thousands of different organisations with different cultures and different people, the problem is the tool, not the users.

A good tool makes the right thing easy and the wrong thing hard. Story points make the wrong thing easy. They produce numbers that look meaningful, invite comparison, and beg to be aggregated. They’re practically designed to be misused.

The myth of improving at estimation

Let’s look at another defence: story points help teams improve at estimation over time.

This sounds plausible. Practice makes perfect, right? But there’s a hidden assumption here that deserves examination. The assumption is that there’s a stable, learnable skill called “estimation” that teams can get better at.

In reality, every piece of work is different. The factors that made your last story take longer than expected are probably not the factors that will affect your next story. Software development is not like manufacturing widgets, where you can measure cycle time and optimise a repeatable process. Each story involves different code, different requirements, different unknowns.

Teams that track their estimation accuracy over time often find that it doesn’t improve. Or it improves for a while and then degrades. Or it varies randomly with no clear trend. This isn’t because the teams are bad at learning. It’s because there isn’t a stable underlying skill to learn.

What teams actually get better at is playing the estimation game. They learn what numbers the organisation wants to hear. They learn to pad estimates to create safety margin. They learn to break work into smaller pieces not because smaller pieces are better but because smaller estimates are less scrutinised.

The improvement is an illusion. Or worse, the improvement is real but it’s improvement at gaming the system rather than improvement at understanding work.

The false promise of avoiding false precision

Here’s another defence I hear: story points are better than time-based estimates because they avoid the trap of false precision.

This defence has some truth in it. Time-based estimates do create false precision. Saying “this will take 3.5 days” implies a level of accuracy that doesn’t exist.

But story points don’t solve this problem. They just move it. Instead of false precision about time, you get false precision about relative size. Saying “this is a 5” implies that you know how this work compares to other work, that you understand the scope well enough to place it on a scale, that the number means something.

And then the false precision comes back anyway, because most organisations convert velocity into time-based forecasts. If you complete 30 points per sprint and your backlog has 300 points, you forecast 10 sprints. The time-based precision you tried to avoid has snuck back in through the back door.

You haven’t eliminated false precision. You’ve just added a layer of indirection that makes the false precision harder to see and challenge.
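To make the indirection concrete, here is a minimal sketch of the forecast arithmetic. The 300-point backlog and 30-point velocity come from the example above. The slow and fast velocities (24 and 36) are hypothetical values I've picked purely for illustration, as is the function name.

```python
import math


def sprints_to_finish(backlog_points: float, velocity: float) -> int:
    """Whole sprints needed to burn down a backlog at a constant velocity."""
    return math.ceil(backlog_points / velocity)


backlog = 300

# The single-number forecast from the example: 300 points at 30 per sprint.
point_forecast = sprints_to_finish(backlog, 30)

# Hypothetical spread: assume velocity actually wanders between 24 and 36.
slow_velocity, fast_velocity = 24, 36
forecast_range = (
    sprints_to_finish(backlog, fast_velocity),  # best case
    sprints_to_finish(backlog, slow_velocity),  # worst case
)

print(f"Reported forecast: {point_forecast} sprints")
print(f"Range hidden behind it: {forecast_range[0]} to {forecast_range[1]} sprints")
```

Under those assumed velocities, the tidy "10 sprints" that ends up in the report covers anything from 9 to 13 sprints, and that is before you question whether the 300 points meant anything in the first place.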

What story points do to how teams think

Now I want to address the deepest problem with story points, which is what they do to the way teams think about work.

Story points encourage teams to think about work as a quantity to be estimated rather than a problem to be understood.

When you approach work through the lens of estimation, you’re asking “how big is this?” That’s a question about the work as a fixed object, something with a predetermined size that you’re trying to measure.

But software development doesn’t work that way. The work isn’t fixed. The scope isn’t predetermined. The size emerges from how you approach the problem, what trade-offs you make, what you discover along the way.

When teams approach work through the lens of understanding, they ask different questions. What are we trying to achieve? What’s the simplest thing that could work? What do we need to learn? What could we defer?

These questions lead to better outcomes because they engage with the work as something to be shaped rather than something to be measured. They open up possibilities rather than closing them down into a single number.

I’ve watched teams spend an hour debating whether a story is a 5 or an 8. That’s an hour they could have spent actually understanding the work. Actually talking about the approach. Actually identifying risks and unknowns. Actually building shared mental models.

The estimation ritual crowds out the valuable activities. It substitutes a proxy for the real thing. And because the proxy feels productive, feels like work, feels like alignment, teams don’t notice what they’re missing.

What happens when teams stop estimating

Let me talk about what happens when teams stop using story points.

The first thing that happens is fear. Managers worry about losing visibility. They ask how they’ll know when things will be done. They ask how they’ll track progress. They ask how they’ll compare teams.

These fears are worth examining. What visibility did story points actually provide? If the numbers were arbitrary and inconsistent and gameable, what were managers really seeing? They were seeing a theatrical performance of estimation, not a window into reality.

When teams stop estimating and start focusing on flow, something interesting happens. They start measuring things that actually matter. How long do items spend in progress? Where do items get stuck? How often do items get blocked? What’s the cycle time from start to finish?

These measurements are grounded in reality. They’re not opinions or guesses. They’re observations of what actually happened. And they’re much harder to game because they’re based on timestamps, not feelings.
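As a rough illustration of how little is needed, here is a sketch that derives cycle time from start and finish dates. The item IDs and dates are invented for the example, and in practice you would export them from whatever board or tracker you use.

```python
from datetime import date
from statistics import median

# Hypothetical work items: (id, date started, date finished).
ITEMS = [
    ("A-1", "2024-03-01", "2024-03-04"),
    ("A-2", "2024-03-02", "2024-03-10"),
    ("A-3", "2024-03-05", "2024-03-07"),
    ("A-4", "2024-03-06", "2024-03-20"),
    ("A-5", "2024-03-11", "2024-03-14"),
]


def cycle_time_days(started: str, finished: str) -> int:
    """Calendar days between starting and finishing an item."""
    return (date.fromisoformat(finished) - date.fromisoformat(started)).days


# Observed cycle time per item, keyed by item id.
by_item = {item_id: cycle_time_days(s, f) for item_id, s, f in ITEMS}
cycle_times = sorted(by_item.values())

# The item that took longest is the one worth asking questions about.
slowest_item = max(by_item, key=by_item.get)

print(f"Cycle times (days): {cycle_times}")
print(f"Median cycle time: {median(cycle_times)} days")
print(f"Slowest item: {slowest_item} ({by_item[slowest_item]} days)")
```

Nobody voted on any of these numbers. The useful conversation starts with the outlier: why did that one item take so much longer than the rest, and where did it sit waiting?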

Teams that focus on flow also tend to break work into smaller pieces, not because smaller estimates are safer, but because smaller pieces flow better. They hit problems earlier. They learn faster. They deliver value sooner.

This is a genuine improvement in the way teams work, not an improvement in the way teams estimate. And it happens precisely because teams stopped spending energy on estimation and redirected that energy toward actually improving their work.

What to do instead

I’m not saying all estimation is worthless. Sometimes you need to make decisions that require rough forecasts. Should we start this project? Can we deliver by this deadline? How should we staff this team?

But these decisions don’t require story points. They require honest conversations about uncertainty. They require looking at historical data. They require acknowledging what you don’t know.

A team that says “based on how similar work has gone in the past, this might take two to four weeks, but there are significant unknowns around the external integration” is providing more useful information than a team that says “we estimated this at 23 points and our velocity is 8 points per sprint.”

The first statement is honest about uncertainty. The second creates false precision that will be treated as a commitment.
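One way to turn historical data into a range like that is to resample past throughput. This is a sketch of a simple Monte Carlo forecast, not a prescribed method: the weekly throughput history, the count of remaining items, and the function names are all hypothetical, and the choice of the 50th and 85th percentiles is my own assumption.

```python
import random


def simulate_weeks(remaining_items: int, weekly_throughput: list[int],
                   rng: random.Random) -> int:
    """Replay randomly chosen past weeks until the remaining items are done."""
    done, weeks = 0, 0
    while done < remaining_items:
        done += rng.choice(weekly_throughput)
        weeks += 1
    return weeks


def forecast(remaining_items: int, weekly_throughput: list[int],
             runs: int = 5000, seed: int = 1) -> tuple[int, int]:
    """Return (likely, cautious) week counts: 50th and 85th percentiles."""
    if max(weekly_throughput) <= 0:
        raise ValueError("history must contain at least one productive week")
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    results = sorted(
        simulate_weeks(remaining_items, weekly_throughput, rng)
        for _ in range(runs)
    )
    return results[int(0.50 * (runs - 1))], results[int(0.85 * (runs - 1))]


# Hypothetical history: items finished in each of the last six weeks.
history = [2, 4, 3, 5, 1, 4]
likely, cautious = forecast(remaining_items=12, weekly_throughput=history)
print(f"Probably around {likely} weeks, and {cautious} weeks to be safer")
```

The output is a range with the uncertainty left visible, built from what actually happened rather than from what people guessed. It still says nothing about the unknowns in the external integration, so that caveat has to be stated alongside it.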

Have the conversations you actually want to have

Let me return to where I started. “Story points trigger conversations about effort and complexity.”

If you find yourself defending a practice by pointing to its side effects rather than its primary purpose, it’s worth asking whether you should replace that practice with something that achieves the side effects directly.

If you want conversations about effort, have conversations about effort.

If you want conversations about complexity, have conversations about complexity.

If you want shared understanding, build shared understanding through discussion, collaboration, and working together on the actual work.

You don’t need a numerical ritual to have good conversations. The ritual gets in the way more often than it helps. The numbers create more problems than they solve.

Stop estimating. Start understanding. Your team will be better for it.